The growing use of artificial intelligence among teenagers has brought new attention to potential security and privacy concerns. Recent data indicates that two‑thirds of teens now use AI chatbots, and about one‑third of them interact with these systems every day. These numbers reflect the increasing role that AI tools are playing in how young people communicate, learn, and spend their time online.

AI chatbots provide fast access to information, answer questions, and assist with tasks such as studying or creative projects. They can help users find explanations for schoolwork, explore ideas, or engage in conversation. Their convenience and ease of use have contributed to their rapid adoption among teenagers, making these systems a common part of daily life for many young people.

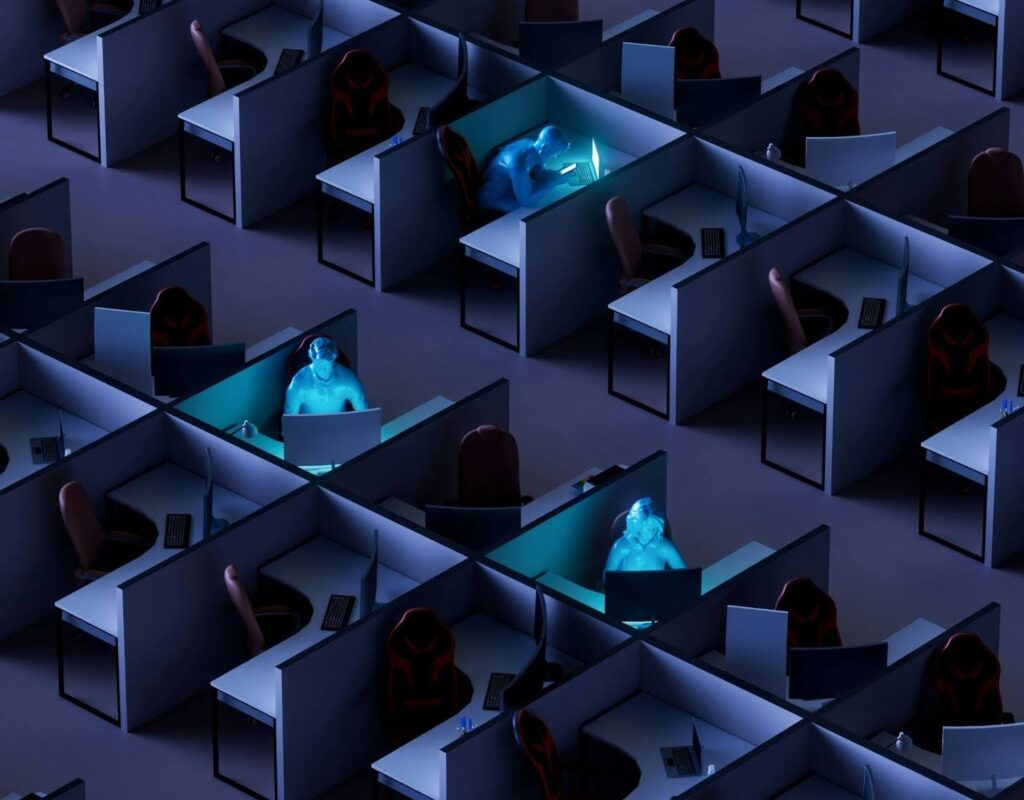

Despite these benefits, there are concerns about the security and privacy implications of widespread AI use. Chatbots process user inputs to generate responses, and some systems store interactions to improve future performance. Teenagers, in particular, may share personal information without fully understanding how it could be used or stored. This raises questions about the potential for data exposure, as well as the broader implications of storing sensitive information in digital systems.

Another area of concern is the accuracy and reliability of content generated by AI. While chatbots are trained on large datasets to provide informative responses, their outputs may sometimes be incorrect, misleading, or incomplete. Teens who rely on these systems for homework, advice, or guidance may not always be able to distinguish between accurate and inaccurate information, which can affect learning outcomes and decision-making.

Frequent AI use among a significant portion of teenagers also raises questions of digital literacy. Engaging with AI requires an understanding of both the capabilities and limitations of these tools. Young users may benefit from guidance on how to interpret AI responses critically, recognize potential errors, and maintain appropriate boundaries when sharing personal information. Educators, parents, and caregivers play a role in helping teenagers develop these skills so that AI use remains safe and responsible.

Frank Palermo, COO of NewRocket, emphasizes the importance of learning to collaborate with AI systems. He says, “The next phase of AI adoption will be led by people who know how to work alongside intelligent systems, not hand work over to them entirely.” This perspective highlights the need for teenagers and other users to approach AI as a tool to augment human skills rather than a replacement for careful thought or decision-making.

At the same time, the rapid growth of AI adoption has prompted discussions about regulatory and industry responses. Technology companies that develop AI tools are increasingly investing in safety measures, such as content moderation, data privacy protections, and user guidance. However, there is ongoing debate about whether these measures are sufficient, particularly for younger users who may be less aware of the risks associated with AI interactions.

Internationally, governments and policymakers are exploring approaches to improve AI safety and accountability. Proposals often focus on transparency in AI operations, restrictions on data collection, and measures to protect vulnerable users. As AI technology continues to evolve, there is recognition that securing these systems and educating users are both necessary components of a broader strategy to mitigate risks.

The widespread adoption of AI by teens illustrates a larger trend in society toward integrating AI tools into daily life. With two‑thirds of teenagers using chatbots and one‑third engaging with them every day, the technology is shaping how the next generation interacts with information and digital platforms. Ensuring that these interactions are safe, secure, and productive will require ongoing collaboration among technology developers, educators, policymakers, and families.

As AI becomes more prevalent, the focus on security and responsible use is likely to intensify. While these tools offer many opportunities for learning and engagement, understanding their limitations and potential risks is essential, particularly for younger users. Preparing teenagers to use AI safely and responsibly will be a critical part of navigating the technology-driven world of the future.